Over the past few years, members of the IP bar in Canada have discussed how long some decisions have remained under reserve. During the Federal Courts’ Town Hall at last year’s CBA IP Day, the Chief Justice was asked whether steps were being taken to address perceived delays in the issuance of judgments.

Part of the answer was that there was insufficient publicly available data on how long judgments were taking, or on how to evaluate any delays. The bar was encouraged to provide further feedback and to organize to collect data.

This article, and hopefully the series that follows, analyzes data on decision turnaround times at the Federal Court. The hope is that presenting, analyzing, and discussing this data will facilitate meaningful engagement between the bench and bar on improvements to the Federal Court system for the benefit of the public.

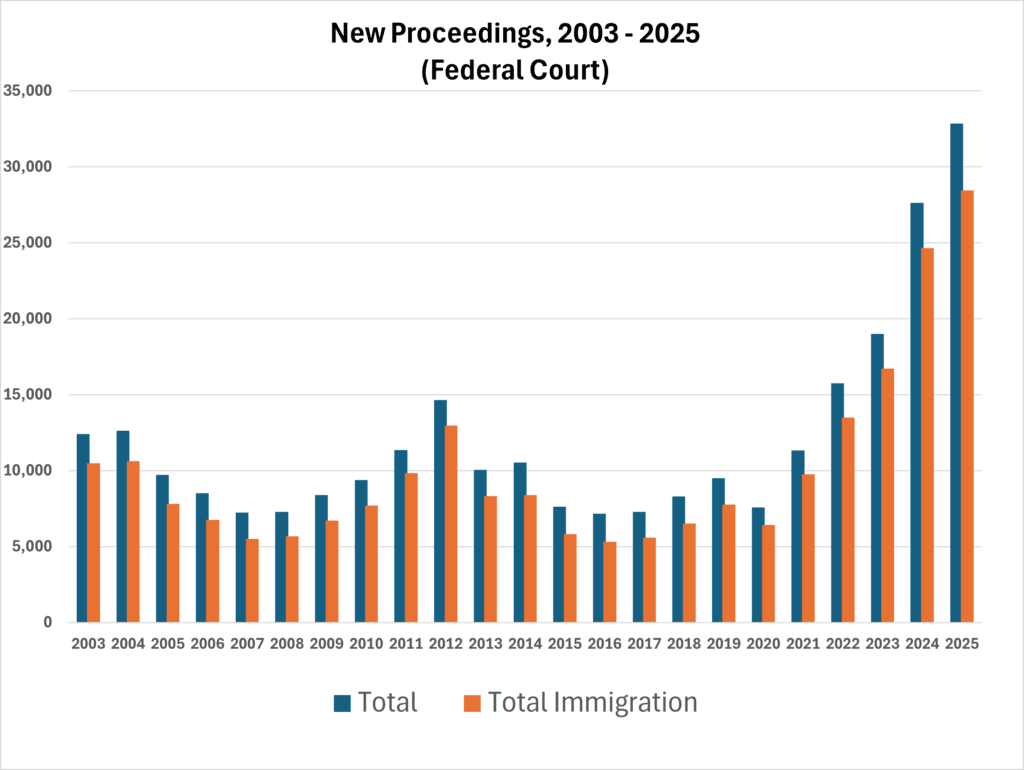

This article should not be taken as casting judgment on any member of the Court. As is widely acknowledged across the legal world, courts are facing unprecedented caseloads. In the Federal Court, particularly in immigration matters, filings have increased more than four-fold since 2020.[1][2] The Federal Court and the Canadian Bar Association have made submissions to the federal government identifying severe structural funding shortfalls and calling for increased funding, which should be supported.[3] The situation is dire across the country. Court backlogs have been described as an access to justice crisis by the former Chief Justice of Canada, Beverley McLachlin.[4]

It is clear that courts alone have limited tools for improving service time and efficiency. Nonetheless, I believe that gathering and discussing relevant objective data will help inform the relevant decision-makers about the issues. To date, I am not aware of any widely available data or studies on turnaround times (also described as delivery times or wait times), which are an important measure of access to justice.

Editorial note (April 29, 2026): This article and the associated graphs have been edited to remove individual name labels to emphasize that the focus of the analysis is to examine observable causes of variability in decision-writing time, rather than to discuss particular judges.

Editorial note (May 14, 2026): Some of the graphs have been updated to reflect revised data processing, which filled in additional information in a few cases.

This page is best viewed on desktop devices to access the interactive graphics. It will work on mobile but there may be formatting artifacts in the graphs.

How long do judges take to make decisions?

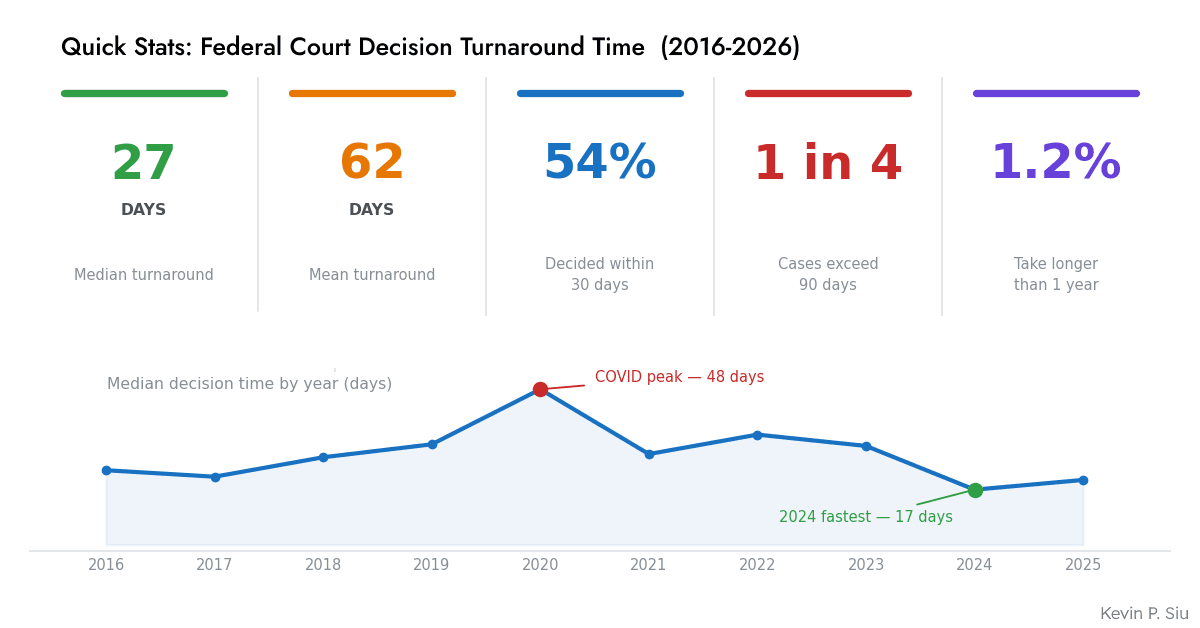

In the last ten years (2016-2026), the median published decision was issued 27 days after the last hearing day, while the mean was 62 days. Approximately 12,500-13,000 decisions in the data met the relevant criteria for 2016-2026 (see Data Sources below). These include both interlocutory and final decisions.

The longest turnaround time in the data was 1,202 days (3 years, 3 months, and 16 days). More than 330 reported cases had a turnaround time of 0 days (same-day decisions).

The stark difference between the mean and the median is attributable to the relatively large number of “quick” decisions compared to a long tail of “slow” decisions. A majority (52.1%) of decisions are issued within 28 days (in fact, about 20% are issued within 7 days). A “long tail” of delayed decisions pulls the mean up significantly. (Figure 1)
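To make the mean/median gap concrete, here is a minimal Python sketch that summarizes a list of turnaround times. The sample values are invented for illustration and are not drawn from the dataset; the function name `summarize_turnaround` is hypothetical.

```python
from statistics import mean, median


def summarize_turnaround(days):
    """Summarize turnaround times (days from last hearing to judgment)."""
    n = len(days)
    return {
        "median": median(days),
        "mean": mean(days),
        # share of decisions issued within one week and within four weeks
        "share_within_7": sum(d <= 7 for d in days) / n,
        "share_within_28": sum(d <= 28 for d in days) / n,
    }


# Invented sample: many quick decisions plus a long tail of slow ones.
sample = [0, 3, 5, 7, 10, 14, 20, 25, 27, 30, 45, 60, 90, 180, 400]
print(summarize_turnaround(sample))
```

With this invented sample, one 400-day decision and a few slow ones push the mean to more than double the median, the same long-tail pattern described above.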

This distribution is broadly similar across all practice areas, but IP cases have a wider and flatter distribution, with some cases taking disproportionately long (30 decisions spent 365+ days under reserve), which pulls the mean up significantly. In non-PMNOC intellectual property cases, the median time is 47 days while the mean is 95 days. (Figure 2)

PMNOC decisions are a special case. The PMNOC Regulations prescribe a statutory stay preventing issuance of an NOC to the generic defendant in a PMNOC proceeding for 24 months or until a court disposition, unless the period is extended by court order. The Federal Court has a practice of scheduling PMNOC trials to be heard about 21 months after the Statement of Claim is filed, with the goal of issuing decisions within the 24-month period (implying a decision turnaround time of approximately 3 months).
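The roughly 3-month window follows from simple date arithmetic. The sketch below assumes, as the paragraph implies, that both the 21-month hearing target and the 24-month period are counted from the filing of the Statement of Claim, and it ignores the length of the trial itself; the helper names are hypothetical.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    total = d.month - 1 + months
    year, month = d.year + total // 12, total % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def pmnoc_decision_window(claim_filed: date, hearing_months: int = 21, stay_months: int = 24) -> int:
    """Days between the target hearing date and the end of the 24-month period,
    both assumed to run from the filing of the Statement of Claim."""
    hearing = add_months(claim_filed, hearing_months)
    stay_end = add_months(claim_filed, stay_months)
    return (stay_end - hearing).days


print(pmnoc_decision_window(date(2023, 1, 15)))
```

For a claim filed on January 15, 2023, this places the hearing around October 15, 2024 and leaves 92 days until the 24-month mark.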

As the data show (although the sample size is smaller), PMNOC decisions have a median reserve time of 38 days and a mean reserve time of 71 days. While some decisions take significantly longer (note that this data does not distinguish between s. 6, s. 8, or s. 8.2 proceedings, nor between final and interlocutory orders), most decisions appear to be rendered well within the Federal Court’s stated service standard. (The two decisions that took more than 365 days are not even decisions on the merits: one relates to costs and the other is a motion to amend.)[5] (Figure 3)

The experience with PMNOC proceedings demonstrates that the Federal Court is able to issue complex patent decisions within a short period of time, and that time under reserve is not simply a function of “slow judges”. A reasonable assumption, however, is that judges prioritize issuing PMNOC decisions within the relevant time period, potentially at the expense of slower decisions in non-PMNOC matters.

Trends

Over the past 10 years, the overall average decision turnaround time has not materially increased, apart from a COVID-related blip from 2020-2023. If anything, overall decision turnaround times are going down. (Figure 4)

This was somewhat unexpected. One reason may be that the composition of cases has changed, with faster decisions being made in larger numbers (e.g., in immigration). This will be explored in a subsequent post. However, even when the data are split by practice area, there is a noticeable trend towards faster decisions. (Figure 5)

I suspect that more than one factor is at play here, though this cannot currently be proven. Anecdotally, the number of IP cases commenced and going to trial has been dropping over the past two years. In the captured data, interlocutory decisions (which generally take less time than final decisions) may therefore represent a higher share of IP cases, lowering the average time for any decision but not necessarily the time to a final decision. This is admittedly speculative at the moment but will hopefully be explored further in a subsequent post.

How do judges differ in writing time?

There is marked variability in decision-making time between individual judges. Among judges with at least 20 decisions, the fastest has a mean turnaround time of 7 days, while the slowest has a mean turnaround time of 211 days. (Figure 6)

An attempt was made to control for outlier effects by excluding each decision-maker’s longest decision and relying on the median rather than the mean. Notably, for several judges the median time was greater than the mean time. A median above the mean means the typical decision, rather than a handful of very slow ones, drives the average, so those averages were not being dragged up by only a few isolated cases.
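The outlier control described above (drop each judge’s single longest decision, then compare mean and median) can be sketched as follows. This is an illustrative reconstruction, not the script used in this study; `records` is assumed to be a list of (judge, turnaround-in-days) pairs, and the 20-decision minimum is assumed to be applied before trimming.

```python
from collections import defaultdict
from statistics import mean, median


def per_judge_summary(records, min_decisions=20):
    """records: iterable of (judge_id, turnaround_days) pairs.

    Returns {judge_id: (mean, median)} computed after dropping each judge's
    single longest decision. Judges with fewer than `min_decisions` decisions
    (counted before trimming) are excluded.
    """
    by_judge = defaultdict(list)
    for judge, days in records:
        by_judge[judge].append(days)

    summary = {}
    for judge, days in by_judge.items():
        if len(days) < min_decisions:
            continue  # too few decisions for a stable estimate
        trimmed = sorted(days)[:-1]  # exclude the longest decision
        summary[judge] = (mean(trimmed), median(trimmed))
    return summary
```

Passing `min_decisions=5` gives the looser threshold used for the IP-only chart below.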

Variability in decision-making time may be attributable to many factors, such as workload, case composition, case assignment, case complexity, writing style and speed, or other non-measurable factors (such as health issues). I will attempt to explore these factors in subsequent posts. Counterintuitively, the preliminary data suggest that neither complexity nor workload is a significant contributor to the variability.

The consistency of turnaround time also differs considerably across judges. In the box-and-whisker plot, a tighter box indicates more consistent decision-making time for a given judge, while a wider box indicates less. Some judges are consistently fast (tighter distributions), while others are fast only in some cases (wider distributions).
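Box width in a box-and-whisker plot is the interquartile range (IQR). A minimal sketch using Python’s standard `statistics.quantiles`; `method="inclusive"` is chosen on the assumption that the plotting tool uses linear interpolation between data points, and other quartile conventions would shift the box edges slightly. The judge labels and values are invented.

```python
from statistics import quantiles


def iqr(days):
    """Interquartile range (the box width in a box-and-whisker plot)."""
    q1, _, q3 = quantiles(days, n=4, method="inclusive")
    return q3 - q1


def rank_by_consistency(by_judge):
    """Order judges from narrowest to widest box, i.e. most to least consistent."""
    return sorted(by_judge, key=lambda judge: iqr(by_judge[judge]))


example = {
    "judge_x": [5, 10, 30, 120, 300],  # fast on some cases, very slow on others
    "judge_y": [20, 22, 24, 26, 28],   # consistently around three weeks
}
print(rank_by_consistency(example))
```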

What about IP decisions?

The aggregated data can be broken down by subject matter, so we can see who the quickest decision-makers are in a particular area, such as IP. Since I practice in IP, this was of interest to me and was one of the motivations for this study. However, when I looked at the data, I hesitated to post it because of the acknowledged methodological limitations (primarily, the lack of distinction between interlocutory and final decisions, and the use of published decisions only).

Further, limiting the data to IP cases reduced the sample size dramatically. There were 860 reported IP cases between 2016 and 2026. To filter out some noise, I limited the graph to judges with at least 5 IP decisions, which reduced the data to 642 decisions. Across the 38 qualifying judges, some per-judge sample sizes are quite small. Cases were also not evenly distributed across judges over the sample period (2016-2026): the most prolific judge had 64 reported cases, the next three had between 30 and 40, and everyone else had fewer than 30. Since the sample sizes are so small, no meaningful inferences should be drawn here without careful analysis. Nonetheless, “for curiosity” only, the IP-only rankings are below. (Figure 7)

Small Sample Size Alert: To (mis)borrow a sports analytics phrase, the data below should be treated with caution due to the small sample sizes involved.

Judges’ workload

One hypothesis is that judges’ workload affects decision turnaround time: the more overworked a judge is, the longer decisions take to issue. This hypothesis is complicated by at least three factors.

First, the available data can only measure output in terms of issued decisions, not workload by case assignment directly. It is unclear to what extent judges are assigned cases for hearing that ultimately do not result in issued decisions (for example, due to settlement or discontinuance). However, I consider it likely that this effect is similar across all judges (implicitly assuming that judges encounter the same general rate of settlement, etc.), so it should not materially affect relative comparisons.

Second, workload is not likely to be uniform across practice areas. The work required to hear and decide an immigration matter may differ from the work required to hear and decide a patent matter. This is not a judgment on the nature of the case, but a reflection of the amount of evidence and number of trial days that a judge must sift and sit through in each type of proceeding. This is harder to assess directly but may be inferable from a deeper analysis across practice areas.

Third, it is not necessarily clear that a heavier workload (as measured by output) should result in longer turnaround times. Some judges may prefer to clear their dockets by issuing more (perhaps shorter) decisions more quickly, rather than holding a larger number of decisions under reserve.

The average judge issued about 30-33 reported decisions per year in the past three years.

While the Court’s overall output has increased over the last 10 years, the output of each judge has stayed fairly consistent: within the dataset, there has been no marked increase in decisions issued per judge. Judges in our sample have issued about the same number of decisions per year in the last few years, allowing for a dip in 2020 and a partial clearing of backlogs from 2021-2022. (Figure 8)

The absence of any clear increase in judges’ workload, as measured by decision output, can be attributed partly to the increase in the complement of judges from about 42 in 2016-2021 to 48 last year. The additional judges have, at least up to 2025, kept the number of decisions per judge steady.

Does workload correlate to turnaround time?

There is a very weak (but statistically significant) positive correlation between case load (as measured by output-per-year) and median turnaround time, as shown below.

Case output accounts for only about 2% of the inter-judge variability. This suggests that, at the margin, workload is not a material contributor to decision-making time; individual judge characteristics appear far more relevant. This analysis does not account for decision quality or for workload not reflected in output, which is harder to measure.

As an aside, plotting the data this way clearly identifies certain prolific decision-writers (rightmost points). The top three judge-years for reported decisions all belong to the same judge (bottom right), indicating both high output and quick turnaround. (Figure 9)

This result may seem counterintuitive, but it reflects an inherent issue in what we are measuring. Since we do not have data on how cases are assigned to judges, output was used as a proxy for workload. However, output reflects two opposing forces: a judge may issue more decisions because they are assigned more cases (which would support a positive correlation), or because they write faster and therefore issue more decisions in a year (an obvious point, but one that would produce a negative correlation, with causality running in the opposite direction).

I attempted to dig deeper by analyzing whether each individual judge’s turnaround time correlated with their number of decisions per year. Only four judges showed a statistically significant correlation, and the direction of the correlations was split roughly evenly (about half the judges wrote faster in years with more decisions and half wrote slower, though the correlations were largely weak and not significant). This is effectively indistinguishable from random noise. I infer from this additional analysis that output is not a helpful statistic for analyzing turnaround time.

Whether workload more broadly (i.e., not measured by output) correlates with turnaround time remains unclear to me, because the limitations of the data prevent the confounding forces discussed above from being separated. However, I do not believe such a correlation is likely, unless there is reason to suspect a material systematic issue that creates inter-judge variability in the relationship between assigned cases and output.

Limitations on workload data

It is important to note some additional limitations of the underlying data. The dataset does not include interlocutory decisions by Associate Judges, so their caseload is not measured. As case managers and first-line decision-makers on most procedural motions, Associate Judges feel the brunt of the caseload more acutely than the Justices of the Court. Further, there has been a marked increase in the number of cases filed since 2021, which may not yet be fully reflected in reported decisions.

The Federal Court publishes statistics which show this stark increase (Figure 10):

The mean time between the filing of a case and its first reported decision (non-immigration) is 665 days (median: 439 days).[6] We would therefore expect any impact of workload on decision time to appear in the data within about 1.5-2 years, allowing for time under reserve. If the increase in filings persists, we should expect judges’ workload to increase markedly over 2026-2028.

However, there is reason to believe that a sudden large increase in caseload has a separate effect. Since the number of judges, and therefore their output capacity, is relatively fixed, an increase in caseload will delay the scheduling of hearings due to judge availability. Keeping individual judges’ workloads at a constant level will result in longer delays between filing and final disposition, without necessarily affecting turnaround time per se.

This effect is asymmetric. An individual judge may handle the same general number of cases and issue decisions at the same general rate (i.e., have a constant workload), but a litigant at the back of the queue feels all of the intermediate delays between filing, scheduling, and decision. As the number of cases filed grows, the backlog grows faster still, compounding the delay felt by litigants. This data does not analyze the litigation backlog.
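The asymmetry can be illustrated with a toy first-in, first-out queue. All numbers are invented: the court is assumed to dispose of a fixed number of cases per month, so output (and each judge’s workload) stays constant while filings step up. The function and its parameters are hypothetical, and real scheduling is far messier.

```python
def simulate_queue(filings_per_month, capacity_per_month):
    """Toy first-in, first-out model of a court's docket.

    Each month new cases join the queue and the court disposes of at most
    `capacity_per_month` of them. Returns, per month, the number disposed of,
    the backlog left over, and the approximate wait (in months) a newly filed
    case faces before the existing backlog is cleared.
    """
    backlog = 0
    history = []
    for filed in filings_per_month:
        backlog += filed
        disposed = min(backlog, capacity_per_month)
        backlog -= disposed
        history.append((disposed, backlog, backlog / capacity_per_month))
    return history


# Invented numbers: capacity fixed at 100 cases/month, filings step up to 120.
for month, row in enumerate(simulate_queue([100, 100, 100, 120, 120, 120], 100), start=1):
    print(month, row)
```

In this toy run, monthly output never changes, yet the backlog grows by 20 cases every month once filings exceed capacity, and the wait facing a newly filed case lengthens accordingly.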

To assess this effect would require more data on delays between filing dates and final dispositions, as well as scheduling delays, which is not simple to analyze with present data.

Stay tuned for the sequel(s)

The next article(s) in this series are anticipated to go into further depth on the impact of types of proceedings, complexity, and other factors.

Update: The sequels have been posted – see Part 2 (subject-matter), Part 3 (complexity), and Part 4 (queue sizes) for more.

Acknowledgements

Three (relatively) recent developments have made it feasible to conduct such an analysis without a disproportionate amount of effort.

First, the Access to Algorithmic Justice (A2AJ) project,[7] led by Simon Wallace and Sean Rehaag, compiled an excellent national dataset, initially published in mid-2025, that contains the full text and metadata of judgments from across Canada. The Federal Court data in A2AJ includes the full text of almost all decisions issued since 2001 that are available on the Federal Court website (however, see the limitations on the data below). As it says on the tin, this data has made systematic algorithmic analysis much easier than before.[8]

Second, at some point in the last few years, the Federal Court (via the Courts Administration Service) upgraded its software systems, which has made accessing electronic court docket information much more reliable. CAS often does not get sufficient credit for infrastructure upgrades, though more are always needed.

Third, the advent and spread of generative artificial intelligence tools that enable rapid prototyping and revision of code (namely, Claude Code and VSCode Copilot) have made it possible to develop better software and attempt larger-scale data analysis in a short amount of time. While I do not think that AI can currently replace human effort in proper research and analysis, it is undeniable that such tools (when put to good use) can enable higher quality and quantity of research.

Generative AI was used in this project to: prepare and revise code to access relevant data based on human-coded prototypes; clean and arrange data; and generate the interactive visuals in a rapid, iterative manner. All text is my own. Although AI was integral to the project, efforts have been made to check the data and visuals for accuracy. Based on my spot checking and cross-referencing using different methodologies, and on my understanding of the data and APIs, I believe the data are generally reliable, although this is not guaranteed. (For the avoidance of doubt, generative AI was not involved in creating the underlying data, which already exists from other sources.)

Data Sources

The data specifically used in this study are:

- The A2AJ Open Access Dataset (Federal Court), containing the full text and metadata for more than 35,000 Federal Court decisions since 2001.

- The Federal Court’s electronic court files system, which provides case-specific information including the docket numbers, filing dates, the nature of proceeding, and individual docket entries.

- CanLII’s API, which provides additional metadata for each judgment that is not in the A2AJ database, including the assigned docket numbers and partly AI-generated case classifications (the classifications were not used here).

I also note that the Federal Court and the Courts Administration Service maintain overall statistics on the number, type, and status of proceedings and publish these statistics on a quarterly and annual basis.[9] However, these statistics are not broken down by individual case. Other than for general reference, these statistics were not used in my analysis.

Nonetheless, based on the Federal Court’s published statistics on disposition rates and pending cases, I believe the Court internally tracks whether decisions are final or interlocutory, as well as other time-to-disposition metrics that are not currently available at the case level. If the Federal Court makes this data more widely available, better analyses would be possible.

Notes on Methodology

I focused on the Federal Courts for two main reasons. First, I primarily litigate in the Federal Courts and am much more familiar with the systems and procedures relevant to this discussion. Second (and despite its flaws), the Federal Court has a relatively easy-to-access digital docket system and data available for analysis. Unfortunately, data for other superior courts and provincial courts are still spotty, unreliable, or behind restrictive paywalls or strict licensing terms. I support the calls from legal scholars across the country for courts to make court information easier to access, as this is one access to justice problem among many.

Scope: I limited the analysis to the last 10 years to keep the scope manageable. There is no technical limitation (other than additional computing power and time) that would preclude extending the study back to 2001. However, in my view this was not necessary: there was sufficient data in the last 10 years to perform statistical analyses, and since most current judges were not on the bench more than 10 years ago, older data are unlikely to be significantly informative.

The A2AJ dataset contained 14,027 Federal Court decisions from 2016-2026 (as of April 6, when the data were processed). Not all decisions survived automated classification, for reasons such as missing hearing dates, typographical errors in the original judgment, and formatting problems. About 13,000 decisions could be processed using automated scripts, although about 500 of these were missing one or more pieces of information (such as the hearing date or judge). Additional manual effort could make the dataset more complete, given sufficient time.

Turnaround time was measured by calculating the number of days between the judgment date and the last hearing date reported in the decision.
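A minimal sketch of that calculation, assuming the judgment date and the hearing date(s) have already been parsed into `datetime.date` objects (the function name is hypothetical). Taking the maximum handles multi-day hearings, and a negative result is treated as a data error, which may catch some of the typos discussed below.

```python
from datetime import date


def turnaround_days(judgment_date: date, hearing_dates: list[date]) -> int:
    """Days from the last hearing date to the judgment date (0 = same-day decision)."""
    last_hearing = max(hearing_dates)  # multi-day hearings: count from the final day
    days = (judgment_date - last_hearing).days
    if days < 0:
        # A judgment "before" its hearing usually signals a typo in one of the dates.
        raise ValueError(f"judgment date {judgment_date} precedes last hearing {last_hearing}")
    return days


print(turnaround_days(date(2024, 3, 1), [date(2024, 1, 10), date(2024, 1, 12)]))
```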

Judgment date was obtained from the metadata in the A2AJ dataset and is generally the date on which the decision was published by the Federal Court. In some circumstances, decisions are published later than they are issued; no attempt was made to detect such edge cases. Additionally, the judgment date is the date of the public decision. If a confidential decision was issued earlier, that date was not used.

Hearing dates were detected automatically using a combination of regular expressions, word searching, manual editing, and AI-assisted review. Most of the hearing-date detection script was AI-generated via Claude Cowork and Claude Code (Opus 4.6). (The script itself is deterministic, since it is a combination of regex and hard-coded word searches, and so is not subject to hallucination.) I spot-checked a random sample but did not verify all ~13,000 cases. The hearing dates reported in the original decisions contain typos, and the format for reporting hearing dates is not standardized across judgments. As such, the detected hearing dates may not be fully accurate.
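For illustration, here is a simplified, hypothetical version of the regex approach. It assumes the English-language `DATE OF HEARING:` label found in many Federal Court decisions, and takes the last day listed after the last month name on that line (so “NOVEMBER 1-3, 2022” yields November 3, 2022). It is not the script used in this study; it deliberately returns `None` rather than guessing when the line is missing or malformed, and French-language labels and many other formats would need additional patterns.

```python
import re
from datetime import date
from typing import Optional

MONTHS = {name: number for number, name in enumerate(
    ["JANUARY", "FEBRUARY", "MARCH", "APRIL", "MAY", "JUNE", "JULY",
     "AUGUST", "SEPTEMBER", "OCTOBER", "NOVEMBER", "DECEMBER"], start=1)}
HEARING_LINE = re.compile(r"DATE OF HEARING:\s*(.+)", re.IGNORECASE)
MONTH_NAME = re.compile(r"\b(" + "|".join(MONTHS) + r")\b", re.IGNORECASE)


def last_hearing_date(text: str) -> Optional[date]:
    """Return the last date on the 'DATE OF HEARING:' line, or None if absent or unparseable."""
    match = HEARING_LINE.search(text)
    if not match:
        return None
    line = match.group(1)
    months = list(MONTH_NAME.finditer(line))
    years = re.findall(r"\b(\d{4})\b", line)
    if not months or not years:
        return None
    last_month = months[-1]
    # Day numbers after the last month name, e.g. "NOVEMBER 1-3, 2022" -> ["1", "3"]
    days = re.findall(r"\b(\d{1,2})\b", line[last_month.end():])
    if not days:
        return None
    try:
        return date(int(years[-1]), MONTHS[last_month.group(1).upper()], int(days[-1]))
    except ValueError:  # impossible dates such as "FEBRUARY 30" (typos in the source)
        return None
```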

Filing date was obtained from the Federal Court’s electronic docketing system. These dates are entered manually by the Federal Court’s Registry. If a decision involved more than one court proceeding with separate docket numbers, the first docket number was used to determine the filing date.

Nature (category) of case was determined from the “Nature of Proceeding” field in the Federal Court’s electronic docketing system. This field is maintained by the Federal Court and categorized by the Registry. If a decision involved more than one court proceeding with separate docket numbers, the first docket number was used to determine the nature of proceeding.
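The “first docket number” rule used for both filing date and nature of proceeding amounts to a simple lookup. The `Docket` record, its field names, and the sample docket numbers and categories in any usage are hypothetical stand-ins for whatever the electronic court file actually returns.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Docket:
    number: str   # hypothetical docket number, e.g. "T-100-22"
    filed: date   # filing date as entered by the Registry
    nature: str   # "Nature of Proceeding" field


def filing_info(decision_dockets: list[str], dockets: dict[str, Docket]) -> Optional[tuple[date, str]]:
    """Take filing date and nature of proceeding from the first docket listed for a decision."""
    if not decision_dockets:
        return None
    first = dockets.get(decision_dockets[0])
    if first is None:
        return None  # docket not found in the electronic court file
    return first.filed, first.nature
```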

Limitations and Caveats

- The A2AJ dataset generally does not include decisions of Associate Judges (formerly Prothonotaries), as these decisions are either (1) not assigned a neutral citation number or (2) not available on the Federal Court website. The Federal Court publishes only final decisions and interlocutory decisions it deems precedential.[10] While the Court provides these judgments to CanLII, they are not otherwise available without manually requesting them from the Registrar.

- CanLII is the only public source of Associate Judge decisions, but it maintains a restrictive no-scraping policy. Its public API does not provide the full text of decisions, so these decisions are not currently easy to analyze. The A2AJ project has identified this issue and has advocated for better access.[11] I support these calls for better access, given CanLII’s public funding and mandate.

- There is no simple way to determine whether a decision is interlocutory or final. It would be useful to split the turnaround analysis between the two, but this is not yet possible without significantly more computing power or time.

- The decisions reported and published by the Federal Court represent a small fraction of the Court’s overall dispositions. For example, the Federal Court reports that it made a total of 20,315 dispositions in 2025 alone, compared to 1,605 decisions (roughly 8%) published on the Federal Court website and in the A2AJ database. Most of the additional dispositions are likely interlocutory orders (although many are unpublished for other reasons) and would have a significantly different turnaround time profile. Analyzing only published decisions therefore introduces a form of selection bias, although it is the best I can do with current tools.

Footnotes

1. ‘Extraordinary’ surge in immigration cases in Federal Court challenges access to justice, top judge says | CBC News

2. Federal Court, Practice Direction and Special Order: Proceedings under the Immigration and Refugee Protection Act and the Citizenship Act, Backlog in processing applications for leave and judicial review (14 May 2025)

3. https://nationalmagazine.ca/en-ca/articles/law/judiciary/2025/calling-on-the-feds-to-close-the-federal-courts-funding-gap

4. http://www.cbc.ca/news/politics/access-to-justicefederal-budget-2021-requests-1.5989872

5. Eli Lilly Canada Inc. v. Hospira Healthcare Corporation, 2016 FC 1218 and Biomarin Pharmaceutical Inc. v. Dr. Reddy’s Laboratories Ltd., 2021 FC 402

6. Due to the lack of distinction between final and interlocutory decisions, I cannot yet evaluate the mean time until “final” disposition. Due to technical limitations (API access limits), I could not readily calculate the average time between filing and disposition for all immigration matters.

7. Access to Algorithmic Justice (A2AJ)

8. While it was possible to scrape the Federal Court website directly, as A2AJ has done, it was often tedious and fraught with issues due to the Court’s publishing platform and systems. A2AJ is the first open-access database of its kind that I am aware of.

9. https://www.fct-cf.ca/en/pages/about-the-court/reports-and-statistics/courts-administration-service-publications

10. https://www.fct-cf.ca/content/assets/pdf/base/Notice%20to%20the%20Profession%20-%20publication%20of%20decisions%20final%20(ENG)%20final.pdf

11. Wallace & Rehaag, Introducing the A2AJ’s Canadian Legal Data: An open-source alternative to CanLII for the era of computational law, online at https://arxiv.org/abs/2509.13032
